A Recap of AI Citation Data Research and Where Your Search Strategy Needs to Pivot

May 6, 2026

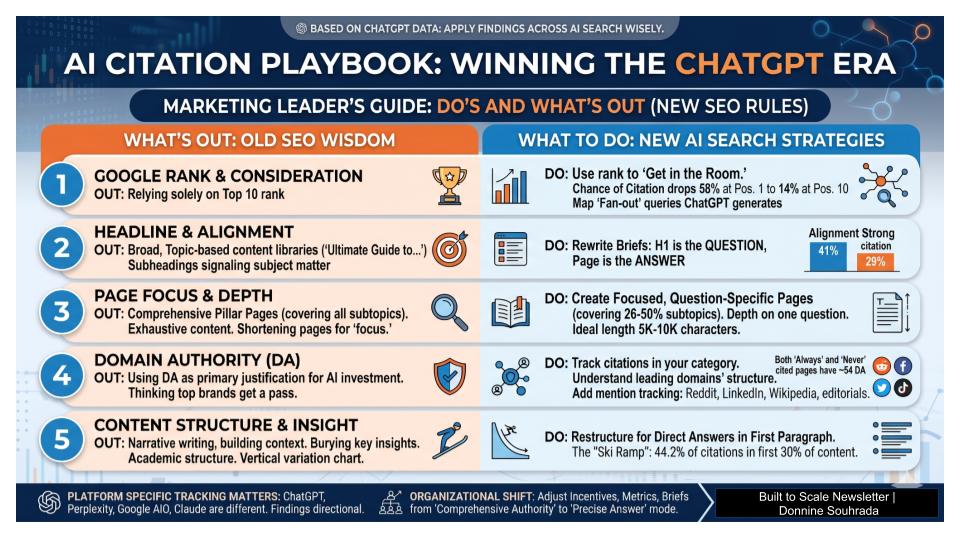

An executive brief on two independent, large-scale studies, one from AirOps and researcher Kevin Indig analyzing 815,000 query-page pairs, another from AirOps tracking 548,534 retrieved pages across 15,000 prompts, map what drives citation in AI-generated responses and what the data shows is not working.

I will caveat this to say, things are changing fast, and although this might be what the data is showing today, we as marketing leaders have to keep pace with how users are discovering and making decisions about your brand with the help or hindrance of AI search.

A NOTE ON SCOPE BEFORE YOU READ FURTHER

The primary research cited in this article was conducted on ChatGPT’s citation behavior specifically, not AI search broadly. ChatGPT, Perplexity, Google AI Overviews, and Claude each use different retrieval formulas. Findings from ChatGPT studies do not automatically transfer to other platforms. The directional principles: answer-first structure, heading alignment, focused pages, appear to hold across platforms based on emerging evidence, but the precise numbers are ChatGPT data. Apply accordingly. Sources cited.

Finding 1: Search ranking gets you in the room for AI consideration, but it does not mean you will be cited by AI.

Google rank is the entry fee. The citation goes to whoever best answers the specific question being asked.

In ChatGPT specifically, pages at position 1 give your page a 58% chance of being cited by ChatGPT. By position 10, that drops to 14%. So yes, everything you've done to earn organic rank still has real value. Organic search optimization is not a wasted investment.

But ranking only gets you into consideration. ChatGPT still evaluates what it finds and selects the best answer, which means a high-ranking page with weak content structure can lose the citation to a lower-ranking page that answers the question more directly.

There's a second problem that ranking can't solve. A third of all AI citations come from fan-out queries, internal follow-up searches ChatGPT runs to build a complete answer. Ninety-five percent of those queries have zero traditional search volume. They don't appear in your keyword tools. That means a significant share of your AI citation opportunity lives entirely outside what your current measurement stack can see and likely outside what any keyword-based strategy was built to capture.

What Marketers Should Do:

Run your highest-priority pages through ChatGPT and Perplexity with the exact questions your buyers ask.

Map the fan-out sub-queries ChatGPT generates for your most important prompts. Those are citation surfaces your keyword tools will never surface on their own.

Add AI citation rate to your performance dashboard alongside organic rank. Optimizing for rank alone is optimizing for the wrong finish line.

Sources: AirOps/Kevin Indig, 815,000 ChatGPT query-page pairs (Growth Memo, 2025) | AirOps, 548,534 pages / 15,000 ChatGPT prompts (ALM Corp, March 2026) | Ahrefs: ChatGPT vs. Perplexity citation overlap with Google

Finding 2: Headline matches to answering the user's exact question is the top on-page signal. Most content libraries are misaligned.

In the ChatGPT dataset, when a page’s headline closely matches the user’s question, the citation rate is 41%. When alignment is weak, it drops to 29%.

Content built around broad topic ownership, “The Ultimate Guide to B2B Demand Generation”, underperforms content built around the specific question a buyer is actually asking. Most enterprise content libraries are full of the former.

The heading has to answer the question, not just signal the subject matter.

Indig’s analysis of 6.8 million H2-H4 headings identified which subheading structures earn citation. The pattern is consistent: subheadings that directly match the fan-out queries ChatGPT generates internally are the ones selected. This likely reflects a broader principle that applies across AI search platforms, since all of them are trying to match content to questions.

WHAT MARKETERS SHOULD DO

Rewrite content briefs around the specific question being answered, not the topic being covered. The H1 should be the question; the page should be the answer.

Use ChatGPT to surface the fan-out sub-queries it generates for your most important prompts. Those sub-queries should become your subheadings.

Audit your top 20 content assets: does each heading directly answer a question a buyer would ask, or does it signal topic breadth? The latter is a citation liability in ChatGPT — and likely across AI platforms.

Source: Kevin Indig / AirOps, 815,000 ChatGPT query-page pairs (Growth Memo, 2025)

Finding 3: Focused pages outperform exhaustive ones, with one critical requirement.

In ChatGPT, pages covering 26–50% of a topic’s subtopics consistently outperform pages trying to cover everything. The classic pillar page, comprehensive, exhaustive, structured around broad topic ownership, actively works against you in this environment.

ChatGPT is selecting the best answer to a specific question, not the most thorough document on a general subject. Depth on a single question is rewarded. Shallow coverage of many questions is not.

For most organizations, the practical implication is to build focused, question-specific pages rather than competing on comprehensiveness. Importantly, “focused” does not mean short. Indig’s data shows longer pages earn more citations overall, with the biggest lift between 5,000 and 10,000 characters. The goal is depth on one question, not brevity.

WHAT MARKETERS SHOULD DO

Break broad pillar pages into focused, question-specific pages. Each page should be the best answer to one question, not an adequate answer to twenty.

For existing pillar pages with traffic, consider splitting rather than rebuilding. Create standalone pages for each major sub-question and use the pillar as a navigation hub.

Do not cut page length in pursuit of “focus.” Depth on a single question is rewarded. Shallow coverage of many questions is not.

Sources: Kevin Indig / AirOps, 815,000 ChatGPT query-page pairs (Growth Memo, 2025) | Search Engine Land: ChatGPT citations favor a small group of domains

Finding 4: Domain authority still matters, but not the way you think it does.

In the ChatGPT dataset, pages that were always cited and pages that were never cited had nearly identical domain authority scores, around 54. At the page level, domain authority did NOT predict citation. The brands that have invested heavily in traditional authority-building are not getting a free pass in ChatGPT.

WHAT MARKETERS SHOULD DO

Stop using domain authority as the primary justification for AI search investment. It gets you into the pool; it does not determine whether you get cited.

Identify the domains that dominate citation in your category on ChatGPT and Perplexity. Understand what they do differently at the page and content structure level.

Add brand mention tracking across Reddit, LinkedIn, Wikipedia, and relevant editorial sources. Earned mentions on high-trust platforms increasingly drive AI citation presence across platforms.

Sources: Kevin Indig / AirOps, 815,000 ChatGPT query-page pairs (Growth Memo, 2025) | Search Engine Land: ChatGPT citations favor a small group of domains

Finding 5: Where your key insight sits on the page determines whether ChatGPT finds it

Indig’s analysis of 18,012 verified ChatGPT citations found that 44.2% of all citations came from the first 30% of content — a pattern he describes as a “ski ramp” that held consistently across industries and validation batches.

The reason is structural. ChatGPT is trained heavily on journalism and academic writing that follows a bottom-line-up-front format. It selects content that surfaces clear entities, definitions, and direct answers early.

Narrative writing that builds context before delivering the point, which describes most long-form marketing content, is structurally disadvantaged.

The effect varies by vertical. Finance showed the steepest ramp, with 43.7% of citations in the first 30%. Healthcare and HR Tech were flatter. Education peaked later, around the 30–40% mark. But the directional principle held everywhere: the opening of your content does the heaviest citation lifting. If your key insight lives in paragraph five, ChatGPT may never reach it.

WHAT MARKETERS SHOULD DO

Restructure existing content so the direct answer appears in the first paragraph. If your key insight is buried after setup, move it to the top.

Treat the subheading and the sentence that immediately follows it as your primary citation surface. That’s where ChatGPT reads most heavily.

Revise your content brief template. If it instructs writers to build context before the payoff, that structure is costing you citations. AI citation rewards journalism structure, not academic structure.

Sources: Kevin Indig, 18,012 verified ChatGPT citations / 1.2M responses (Search Engine Land, Feb 2026) | Search Engine Land: ChatGPT citations favor a small group of domains

The Organizational Problem Underneath the Content Problem

Most marketing organizations aren’t structured to produce the kind of content that wins AI citations on any platform.

The incentive systems reward volume: publishing cadence, word count, and comprehensive topic coverage. The measurement systems reward traffic and rankings, not citation rate or AI visibility. The approval processes favor content that covers all bases over content that makes a specific, defensible claim at the top of the page.

These are not small adjustments. Shifting a content operation from “comprehensive authority” mode to “precise answer” mode requires changes in how briefs are written, how performance is measured, and often how teams are resourced.

The direct question for leadership: does our current content production system have the incentives and metrics to produce content that wins in AI search? In most organizations, the honest answer is no.

What We Know, What We Don’t, and Why It Still Matters Now

The research cited here is the most rigorous available, but the landscape is moving fast and the findings should be held with appropriate confidence. All primary data is from ChatGPT. Perplexity behaves differently, its citations align much more closely with Google’s top 10 results. Google AI Overviews and AI Mode use Google’s own index and selection logic. Claude has a different architecture again. Platform-specific tracking matters.

What holds across platforms, based on available evidence, is the directional logic:

Answer-first content structure

Heading alignment to actual buyer questions

Focused pages that go deep on one question are rewarded more than broad topic coverage.

The brands investing in understanding this now, across multiple platforms, not just one, will have a structural advantage when attribution tooling catches up. The ones waiting for the data to be perfect will spend years rebuilding the visibility they assumed they already owned.

Built to Scale Newsletter by Donnine Souhrada, is published for senior marketing and operations leaders. Donnine has extensive experience in marketing strategy and operations and translates trends to scale customer acquisition and retention growth.

All research citations link to original sources. Primary data in this article is from ChatGPT citation studies. Readers should evaluate applicability to other AI platforms independently.